Author: Ben Selwyn | Posted On: 20 Apr 2026

The adoption gap between AI in daily life and AI in financial decisions has less to do with capability than with accountability.

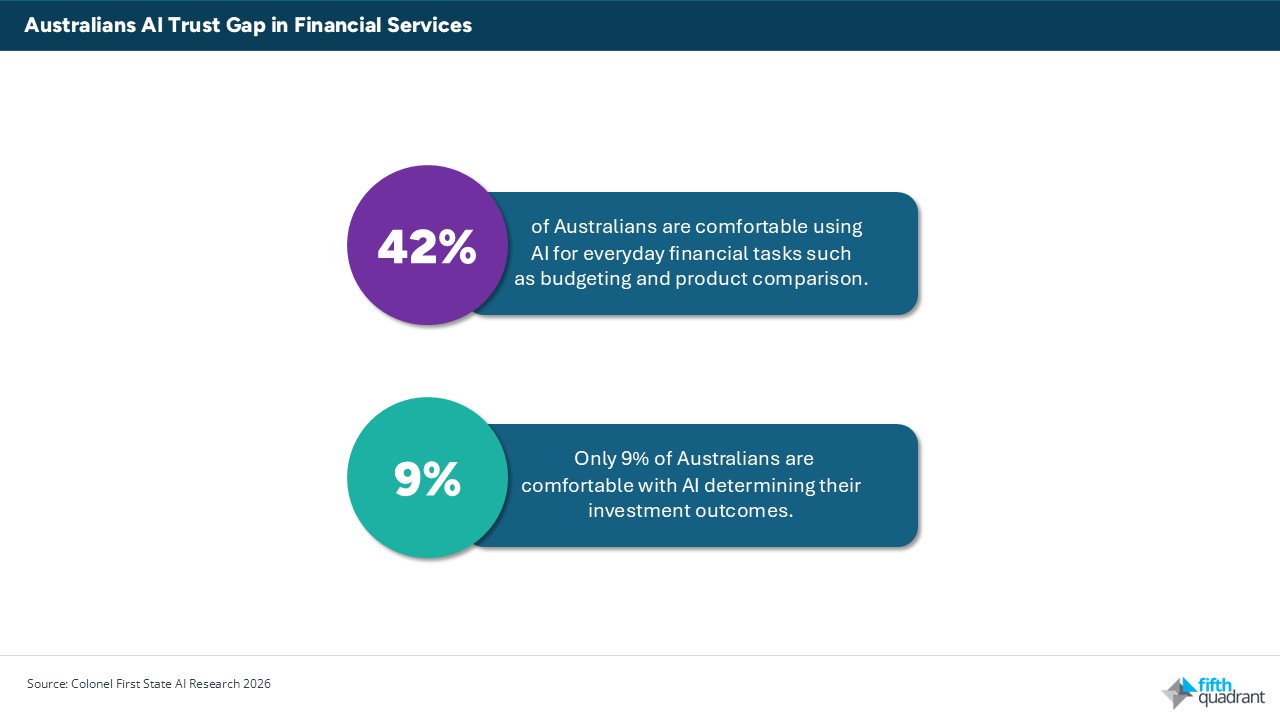

Colonial First State recently commissioned research showing that only 9% of Australians are comfortable with AI determining their investment outcomes. The same organisation is several years into a partnership with Microsoft Azure OpenAI that embeds artificial intelligence across its operations. That contradiction is not unique to CFS. It is the defining tension of financial services in 2026: institutions are deploying AI faster than their customers are prepared to accept it, and the gap between the two is widening.

The irony sharpens when set against everyday Australian behaviour. More than a quarter of Australians use AI daily, a rate well above that of many comparable economies. But something breaks down when the stakes shift from time to money. Daily life, it seems, is a reasonable domain for AI. Financial futures are not. That gap is not closing on its own. Australian financial services firms already use AI at rates above the global average, yet consumer trust in AI for financial decisions sits among the lowest of any country surveyed.

The line between information and advice

42% of Australians are comfortable using AI for everyday financial tasks such as budgeting and product comparison. That number collapses to 9% for investment decisions. This is not a smooth spectrum but a sharp disconnect, and it maps almost exactly onto the legal distinction between financial information and financial advice.

People will accept AI as a research tool. They will not accept it as an adviser. Importantly, this is not about capability. Consumers typically don’t have strong views about whether AI is technically competent to assess a portfolio. What they have is accountability anxiety. When an AI recommendation leads to a poor financial outcome, it is genuinely unclear who is responsible: the algorithm’s designers, the firm that deployed it, the adviser who used it, or none of the above. That ambiguity is what consumers are responding to, even when they cannot articulate it in those terms. It also explains why transparency disclosures have done little to move the dial. Telling consumers that AI is involved is not the same as telling them who is responsible if it goes wrong.

A governance gap the market hasn’t closed

The industry’s response to the trust problem has largely been to move fast and manage sentiment later. ASIC’s 2024 review found that 61% of the licensees it examined planned to increase AI use within 12 months, even as widespread governance gaps were identified. ASIC’s 2026 Key Issues Outlook names agentic AI as a systemic risk on par with cyber threats and crypto volatility.

Consumers share these concerns. When asked what would shift their willingness to engage with AI-driven financial tools, Australians put clearer regulation at the top, ahead of improved data security and the ability to opt out of automated services. The expectation is that accountability comes first. That is not a demand for less AI. It is a demand for a system in which someone is answerable when things go wrong.

Looking ahead

A 2025 KPMG and University of Melbourne study of 48,000 people across 47 countries found that only 30% of Australians believe AI’s benefits outweigh its risks, the lowest share of any country surveyed. That scepticism predates the current wave of financial services AI deployment and is unlikely to fade simply because the technology improves. Australian scepticism about AI in high-stakes decisions runs deeper than in most comparable economies. Capability improvements may actually widen the trust gap if governance doesn’t keep pace, because more powerful systems raise the stakes of accountability failures.

What will close the gap is accountability infrastructure: regulatory clarity about who is liable when AI-assisted advice causes harm, and industry norms that make that liability visible to consumers before something goes wrong, not after. The institutions that move on this now, rather than waiting for regulation to compel them, are the ones most likely to earn durable trust. That is the real competitive question for financial services in 2026.

Closing the AI trust gap in financial services will require more than better technology. It demands deeper insight into evolving consumer expectations and regulatory shifts. Fifth Quadrant’s financial services market research equips institutions with the evidence and foresight needed to navigate this transition, build accountability frameworks, and strengthen trust. Connect with our team to understand how your organisation can move ahead of the curve and turn trust into a competitive advantage.
